The evolution of 3D vision

3D vision is at the heart of modern automation that improves industrial processes in countless ways and makes our lives easier. It helps us sort products, inspect objects in quality control applications and find defects on them, and also complete the most varied tasks faster and more efficiently than humans could ever do. Vision-guided robots are commonly used to perform dangerous tasks and handle heavy objects, so they also increase safety and eliminate the risk of injuries.

3D sensing technologies have come a long way to deliver the benefits we enjoy today, and they keep moving forward: from the first photograph to digital imaging, from 2D to 3D, and from 3D scanning of static objects to the capture of dynamic scenes. What’s coming next?

Together with Tomas Kovacovsky, co-founder and CTO of the Photoneo Group, we looked at the history of 3D machine vision up to the latest advancements that dominate today’s trends such as Industry 4.0. Let’s have a brief look at it.

Photography and the first technologies for image capture

Since the very beginnings of photography, people have been fascinated by the possibility of capturing and recording events. The first known photograph was taken between 1826 and 1827 by the French inventor Joseph Nicéphore Niépce. While his photographic process required at least eight hours, if not several days, of exposure in the camera, his associate Louis Daguerre developed the first publicly announced photographic process (known as the daguerreotype), which took only minutes of exposure. The invention was introduced to the public in 1839, a year that is generally considered the birth of practical photography.

For a long time, photography only served as a medium to record events. Because processing the pictures took a long time, analog technology was not suited to machine vision or decision-making tasks.

In 1969, William Boyle and George E. Smith of the American Bell Laboratories invented the CCD (charge-coupled device) sensor for recording images, which was an important milestone in the development of digital imaging. A CCD sensor captures images by converting photons to electrons; that is, it turns incoming light into an electrical charge that is then read out and translated into digital data. Although CCDs could not compete with standard film for image capture at that time, they started to be used for certain applications and the ball got rolling.

From 2D to 3D

2D sensing launched the automation era and it was the prevalent approach in the automation of the industrial sector for a long time. 2D vision is used in some simple applications even today, including the following:

- Optical character recognition (OCR) – reading of typed, handwritten, or printed texts; barcode reading

- Quality control – often used in combination with special lighting to ensure that the optical qualities of the scanned object remain the same

- Counting

- Picking of items under well-defined conditions

However, the major limitation of 2D technologies is that they cannot recognize object shapes or measure distance in the Z dimension.

2D applications require good, well-defined conditions with additional lighting, which also limits applications such as bin picking. This robotic task can be done with a 2D vision system but it is generally problematic due to the random position of objects in a bin and a large amount of information in the scene that 2D vision systems cannot handle.

People recognized the need for 3D information to be able to automate more complex tasks. They understood that humans could see their surroundings in a 3D view and tell the distance of objects because they had two eyes – stereoscopic vision.

In the 1960s, Larry Roberts, who is widely regarded as the father of computer vision, described how to derive 3D geometrical information from 2D photographs of line drawings and how a computer could create a 3D model from a single 2D photograph.

In the 1970s, a “Machine Vision” course started at MIT’s Artificial Intelligence Lab to tackle low-level machine vision tasks. Here, David Marr developed a unique approach to scene understanding through computer vision, in which he treated vision as an information processing system. His approach started with a 2D sketch, which the computer built upon to arrive at a final 3D image.

Research in machine vision intensified in the 1980s and brought about new theories and concepts. These gave rise to a number of distinct 3D machine vision technologies, which have gradually been adopted in industrial and manufacturing environments to automate the widest array of processes.

First 3D vision technologies

The effort to imitate human stereoscopic vision resulted in the development of one of the first 3D sensing technologies: passive stereo. This triangulation method observes a scene from two vantage points and solves the triangle formed by the two cameras and the scanned object, looking for correspondences between the two images. Based on the disparity between the images, it calculates the distance (depth) to the scanned object. However, this approach relies on finding identical details in both images, so it does not work well with white walls or scenes with no patterns. Passive stereo therefore has low reliability, and its 3D output is usually noisy and requires a lot of computing power.
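The relationship between disparity and depth can be sketched in a few lines. This is a minimal illustration that assumes an ideal, rectified stereo pair, in which depth equals focal length times baseline divided by disparity. The function name and the rig parameters (1200 px focal length, 10 cm baseline) are hypothetical and not taken from any particular camera.

```python
def depth_from_disparity(disparity_px: float,
                         focal_length_px: float,
                         baseline_m: float) -> float:
    """Depth Z = f * B / d for an ideal, rectified stereo pair."""
    if disparity_px <= 0:
        # Zero disparity means no usable match (or a point at infinity).
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


# Hypothetical rig: 1200 px focal length, 0.10 m between the two cameras.
z_near = depth_from_disparity(60.0, 1200.0, 0.10)  # large disparity, close point
z_far = depth_from_disparity(6.0, 1200.0, 0.10)    # small disparity, distant point
print(f"near: {z_near:.2f} m, far: {z_far:.2f} m")
```

Because depth is inversely proportional to disparity, a matching error of a single pixel matters far more for distant points than for close ones, which is one reason why unreliable matches on textureless surfaces translate into noisy depth.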

To compensate for this disadvantage, researchers started to experiment with projecting light patterns onto the scene to create an artificial texture on the surface and identify correspondences in the scene more easily. This method is called active stereo. Though it is more reliable than passive stereo, the reconstruction quality is often compromised by strict requirements on processing time, which makes it insufficient for many applications.

One of the earliest and still very popular methods to acquire 3D information is laser profilometry. This technique projects a narrow band of light (or a point) onto a 3D surface, which produces a line of illumination that appears distorted when viewed from an angle other than that of the projector. This deviation encodes depth information. Line scanners capture one depth profile at a time in quick succession, so either the scanned object or the camera has to move continuously. Laser profilometry was one of the first 3D scanning methods adopted for industrial use, and it is still very popular in metrological applications, for instance.
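How the deviation of the laser line encodes depth can be shown with a deliberately simplified model. The sketch below assumes that the camera looks straight down at the surface, that the laser sheet is tilted by a known angle from the camera's viewing axis, and that one pixel covers a known distance on the surface. Under these assumptions, a surface raised by a height h shifts the line sideways by h times the tangent of that angle. The function name and all numbers are hypothetical; real scanners rely on a full calibration instead.

```python
import math


def height_from_line_shift(shift_px: float,
                           mm_per_px: float,
                           laser_angle_deg: float) -> float:
    """Height in mm from the sideways shift of the laser line.

    Simplified geometry: camera looks straight down, laser sheet is tilted
    by laser_angle_deg from the camera axis, so shift = height * tan(angle).
    """
    shift_mm = shift_px * mm_per_px
    return shift_mm / math.tan(math.radians(laser_angle_deg))


# One hypothetical profile: line shift in pixels at five positions along the line.
profile_shift_px = [0.0, 0.0, 12.0, 12.0, 0.0]
profile_mm = [height_from_line_shift(s, mm_per_px=0.1, laser_angle_deg=45.0)
              for s in profile_shift_px]
print([round(h, 2) for h in profile_mm])
```

Each captured image yields one such height profile, and stacking the profiles while the object moves produces the full 3D scan.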

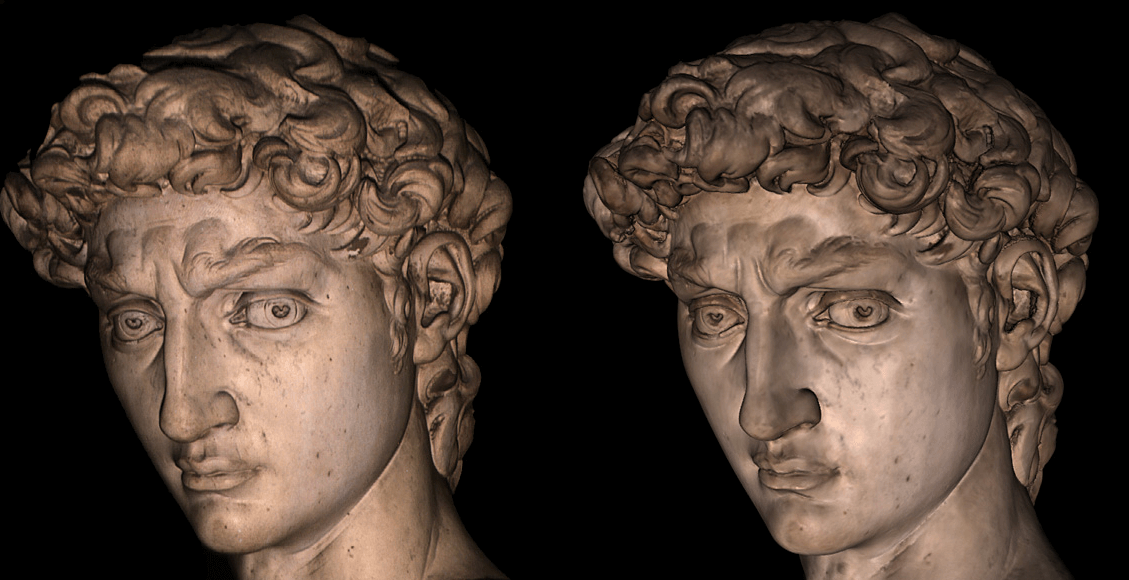

Another method based on projecting light patterns onto a scene is structured light. One of the most cited works discussing the use of structured light with binary codes for digital restoration was The Digital Michelangelo Project, led by Marc Levoy and his team at Stanford University. The project started in 1998 to digitize Michelangelo’s statues with the use of a projector and a camera sensor. The laser scan data for Michelangelo’s David was then used for the statue restoration that started in 2002. Though the method used in this project was not fast enough for real-time applications, it provided the very high accuracy necessary for the digitization of various artifacts and objects. Thanks to this, the technology found its niche in metrological applications and other robotic and machine vision tasks requiring high scanning precision.

Gradually, the structured light technology expanded beyond metrology and penetrated all kinds of online applications using vision-guided robots. The advantage of structured light 3D scanners is that they require no movement. Because they can take a snapshot of the whole scanning area, and one does not need to go around the whole object with the scanner, they are faster than devices based on laser profilometry and require less data post-processing.

From static to dynamic scenes

The capture of movement is a lot more challenging than 3D scanning of static scenes and disqualifies methods that require longer acquisition times.

Because passive stereo does not use any additional lighting, it could be used for capturing dynamic scenes, but only if certain conditions were met. Even then, the results would not be good.

Laser profilometry fares little better than passive stereo in this respect. Because it captures one profile at a time, the camera or the scene needs to move to produce a complete snapshot of the scene, which means the technology cannot capture a dynamic event. To reconstruct depth for a single profile, it has to capture a narrow area-scan image, whose size limits the frame rate and, consequently, the scanning speed.
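The link between frame rate and scanning speed is simple arithmetic, sketched here under the assumption that the object moves at constant speed and that the application needs a fixed spacing between neighboring profiles. The function names and figures (2000 profiles per second, 0.5 mm spacing, 500 mm object) are illustrative only.

```python
def max_transport_speed_mm_s(profiles_per_second: float,
                             profile_spacing_mm: float) -> float:
    """Fastest relative motion that still keeps the required profile spacing."""
    return profiles_per_second * profile_spacing_mm


def scan_time_s(object_length_mm: float,
                profiles_per_second: float,
                profile_spacing_mm: float) -> float:
    """Time needed to sweep the laser line over the whole object."""
    speed = max_transport_speed_mm_s(profiles_per_second, profile_spacing_mm)
    return object_length_mm / speed


# Hypothetical scanner: 2000 profiles per second, one profile every 0.5 mm.
speed = max_transport_speed_mm_s(2000.0, 0.5)
duration = scan_time_s(500.0, 2000.0, 0.5)
print(f"max speed: {speed:.0f} mm/s, scan time: {duration:.2f} s")
```

During that scan time the scene must not change other than through the controlled motion, which is why the method does not suit dynamic events.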

Structured light systems, on the other hand, project multiple light patterns onto the scene in a sequence, one after another. For this, the scene needs to be static. If the scanned object or the camera moves, the code is broken and the 3D point cloud becomes distorted.
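Why motion breaks the code can be seen in a small example. The sketch below assumes a Gray code, one common choice of binary coding for structured light, although the text does not say which code any particular system uses. Each projector column gets a bit sequence, one bit per projected pattern, and a camera pixel recovers its column by reading the bits it observes over the sequence. All function names are hypothetical.

```python
def gray_encode(n: int) -> int:
    """Convert a column index to its Gray code."""
    return n ^ (n >> 1)


def gray_decode(g: int) -> int:
    """Convert a Gray code back to the column index."""
    n = 0
    while g:
        n ^= g
        g >>= 1
    return n


def column_patterns(num_columns: int, num_bits: int) -> list[list[int]]:
    """patterns[k][c] is 1 if projector column c is lit in pattern k (MSB first)."""
    return [[(gray_encode(c) >> (num_bits - 1 - k)) & 1
             for c in range(num_columns)]
            for k in range(num_bits)]


def decode_pixel(observed_bits: list[int]) -> int:
    """Recover the projector column from the bits one pixel saw, MSB first."""
    g = 0
    for bit in observed_bits:
        g = (g << 1) | bit
    return gray_decode(g)


patterns = column_patterns(num_columns=8, num_bits=3)

# Static scene: the pixel sees projector column 5 in all three patterns.
static_bits = [patterns[k][5] for k in range(3)]
static_column = decode_pixel(static_bits)

# Moving scene: the pixel sees column 3, then 4, then 5 as the object shifts.
moving_bits = [patterns[0][3], patterns[1][4], patterns[2][5]]
moving_column = decode_pixel(moving_bits)

print(f"static: column {static_column}, moving: column {moving_column}")
```

In the static case the pixel decodes to the correct column. In the moving case the bits come from three different columns and decode to a column the pixel never actually saw, which then triangulates to a wrong 3D point.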

The need to make a 3D reconstruction of dynamic objects led to the development of Time-of-Flight (ToF) systems. Similar to the structured light technology, ToF is an active method that sends light signals to the scene and then interprets the signals with the camera and its software. In contrast to structured light, ToF structures the light in time and not in space. It works on the principle of measuring the time a light signal takes to travel from the light source to the scanned object and back to the sensor.
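The measuring principle reduces to one formula: the distance is the speed of light multiplied by the round-trip time, divided by two. The following sketch shows this idealized direct measurement; actual ToF cameras often derive the time indirectly, for example from the phase shift of modulated light, which is not modeled here. The function names are hypothetical.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0


def distance_from_round_trip(round_trip_s: float) -> float:
    """Distance to the object: the light covers it twice, out and back."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def round_trip_for_distance(distance_m: float) -> float:
    """Round-trip travel time of light for an object at the given distance."""
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_S


# An object 1.5 m away returns the light after roughly 10 nanoseconds.
t = round_trip_for_distance(1.5)
d = distance_from_round_trip(t)

# A timing error of one picosecond already shifts the depth by about 0.15 mm.
depth_error_mm = distance_from_round_trip(1e-12) * 1000.0

print(f"round trip: {t * 1e9:.2f} ns, distance: {d:.3f} m, "
      f"error per ps: {depth_error_mm:.3f} mm")
```

The tiny time scales involved give a sense of why timing precision is so demanding in ToF depth measurement.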

The first ToF systems had rather low quality. Big players in this field included companies such as Canesta, 3DV Systems, and Microsoft (which later acquired both companies). One of the early, well-known projects was the ZCam, a Time-of-Flight camera developed by 3DV and later purchased by Microsoft to be used for the acquisition of 3D information and interaction with virtual objects in Microsoft’s Xbox video game console.

In 2010, Microsoft released its Kinect sensor system for Xbox, a motion-sensing camera that was based on the PrimeSense technology. The PrimeSense technology used a structured pattern to encode certain pixels (not all of them) and get 3D information. Though the method could not provide high resolution or detailed contours on the edges of the scanned objects, it was widely adopted because its processing speed was rather fast and the technology was also very affordable. It has mainly been used in the academic field, but it can occasionally also be found in industrial environments for robotic picking and other tasks.

In contrast to Kinect 1, Kinect 2 was based on the ToF technology. Advancements in ToF made the method increasingly popular and widely adopted. It could provide higher quality than the PrimeSense technology, yet the resolution of the 3D scans of dynamic scenes was still not sufficient.

Today’s ToF systems are quite popular in 3D vision applications thanks to their fast scanning speed and nearly real-time acquisition. However, their resolution is still an issue and they also struggle with higher noise levels.

In 2013, Photoneo came up with a revolutionary idea of how to capture fast-moving objects and get 3D information in high resolution and with submillimeter accuracy.

The patented technology of Parallel Structured Light is based on a special, proprietary CMOS sensor featuring a multi-tap shutter with a mosaic pixel pattern, which fundamentally changes the way an image can be taken.

This novel snapshot approach utilizes structured light but swaps the role of the camera and the projector: While structured light systems emit multiple patterns from the projector in a sequence, the Parallel Structured Light technology sends a very simple laser sweep, without patterning, across the scene and constructs the patterns on the other side – in the CMOS sensor. All this happens in one single time instance and allows the construction of multiple virtual images within one exposure window. The result is a high-resolution and high-accuracy 3D image of moving scenes without motion artifacts.

A dynamic scene captured by the Parallel Structured Light technology.

The Parallel Structured Light technology is implemented in Photoneo’s 3D camera MotionCam-3D. The development of the camera and its release to the market marked a milestone in the history of machine vision as it redefined vision-guided robotics and expanded automation possibilities to an unprecedented degree. The novel approach was recognized with plenty of awards, including Vision Award 2018, Vision Systems Design Innovators Platinum Award 2019, inVision Top Innovations 2019, IERA Award 2020, Robotics Business Review’s RBR50 Robotics Innovation Awards 2021, inVision Top Innovations 2021, and SupplyTech Breakthrough Award 2022.

3D scanning in motion and color

In 2022, Photoneo extended the MotionCam-3D’s capabilities by equipping it with a color unit for the capture of color data. MotionCam-3D Color is considered to be the next silver bullet in machine vision as it finally enables the real-time creation of color 3D point clouds of moving scenes in perfect quality. Thanks to the unique combination of 3D geometry, motion, and color, the camera opens the door to demanding AI applications and robotic tasks that rely not only on depth information but also on color data.

Real-time creation of a color 3D point cloud of a moving scene using MotionCam-3D Color.

Application areas enabled by machine vision innovations

The possibilities offered by the latest innovations in 3D machine vision allow us to automate tasks that were unfeasible until recently. These applications can be found in manufacturing, logistics, automotive, grocery, agriculture, medicine, and other sectors and include:

- Robotic handling of objects in constant or random motion

- Picking from conveyor belts and overhead conveyors

- Hand-eye manipulation

- 3D model creation for inspection and quality control

- Cleaning and painting of large objects

- Maintenance operations in VR/AR

- Sorting and harvesting in agriculture

- And many more

What’s coming next?

Machine vision continues to develop and bring new advancements with new possibilities. The direction of innovations is always influenced by market demands, customer expectations, competition, and other factors.

We can expect the trend of deploying AI in all areas of machine vision to continue, with the aim of eliminating the need to develop tailor-made algorithms. We see huge potential in the combination of artificial intelligence (AI) with the Parallel Structured Light technology. On the one hand, AI is dependent on good data. On the other hand, the new machine vision technology can provide a large amount of high-quality real 3D data. Combining these two approaches can transform intelligent robotics and enable a new sphere of possibilities.

Another promising direction of future developments is edge computing. Manufacturers are likely to continue their efforts to integrate AI directly into sensors and specialize them for a defined purpose (e.g. person counting, dimensioning, or automated detection of defined object features), making deployment easier for integrators and minimizing the need for additional components. New hardware solutions capable of capturing moving scenes, combined with advanced AI algorithms, will extend the ever-widening application fields into even more challenging areas such as collaborative robotics or complete logistics automation.