Adapting 3D Sensors for AI: Faster Time-To-Algorithm

There’s a quiet but consequential change tucked into PhoXi Control 1.17. It’s called Early Transfer, and it’s about something deceptively simple: the order in which your sensor hands you data, and how changing that order shortens your time to algorithm.

The Old Pipeline, and Why It Mattered Less Before

Until now, when capturing combined color and depth (RGB-D) data from a Photoneo 3D sensor, the pipeline followed a fixed sequence: 3D point cloud data was delivered first, with the 2D RGB texture following behind it.

For purely geometric tasks – volume measurement, presence/absence checks, precise pick-point estimation – this ordering was perfectly fine. The 3D data was the star of the show, and the color texture was a secondary layer enriching it.

But the nature of the applications running on top of 3D sensors has shifted substantially in the last few years. AI-driven pipelines have moved from the research bench to the factory floor, and they bring a different set of demands with them.

What AI Pipelines Actually Need First

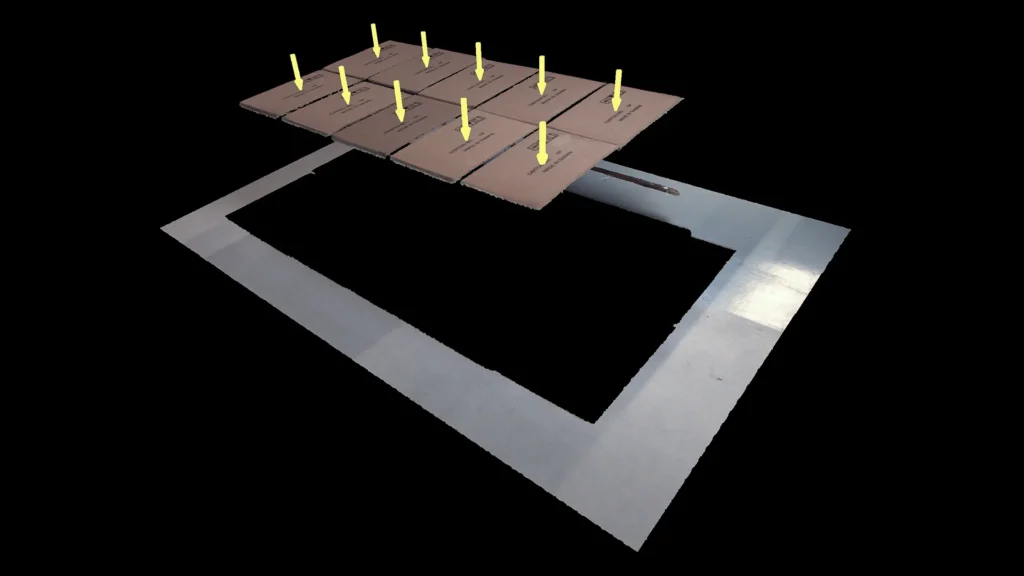

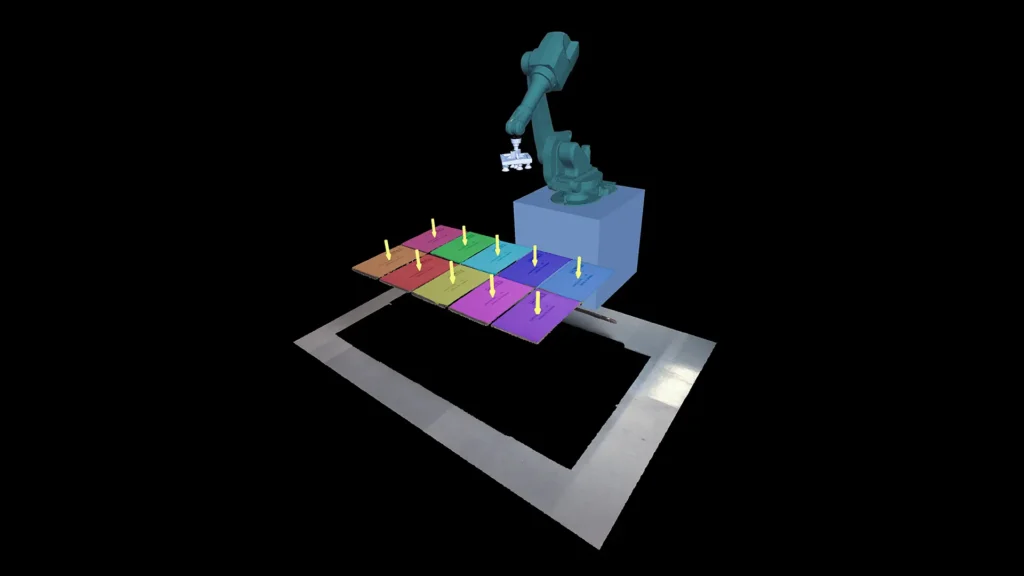

Consider a bin-picking cell processing mixed logistics items. Before any robot can be directed to a pick point, the system needs to understand what it’s looking at. That’s a job for an inference model – a neural network performing object segmentation, classification, or pose estimation. And that model doesn’t run on point clouds. It runs on images.

RGB segmentation of a mixed bin is typically the first computational step in these workflows. Only once the system knows what object it’s dealing with – its class, its orientation category, its region of interest in the frame – does it make sense to extract the corresponding 3D geometry for precise grasp planning.
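The "segment first, then fetch geometry" step can be sketched as follows. This is a minimal illustration, not Photoneo code: it assumes the point cloud is organized (one 3D point per texture pixel), that missing depth is encoded as z <= 0, and that the segmentation mask is a grid of booleans. The helper names `points_in_mask` and `centroid` are hypothetical, and the centroid is only a crude stand-in for real grasp planning.

```python
def points_in_mask(point_cloud, mask):
    """Collect valid 3D points whose pixel is selected by the 2D mask.

    point_cloud: rows of (x, y, z) tuples, assumed pixel-aligned with the texture.
    mask: rows of booleans with the same shape, e.g. from a segmentation model.
    """
    selected = []
    for cloud_row, mask_row in zip(point_cloud, mask):
        for point, keep in zip(cloud_row, mask_row):
            # Assumption: z <= 0 marks a pixel with no depth measurement.
            if keep and point[2] > 0:
                selected.append(point)
    return selected


def centroid(points):
    """Mean of the selected points, used here as a placeholder pick point."""
    if not points:
        raise ValueError("mask selected no valid 3D points")
    n = len(points)
    return tuple(sum(p[i] for p in points) / n for i in range(3))


# Tiny 2x2 example: three pixels masked in, one of them without depth.
cloud = [
    [(0.0, 0.0, 0.0), (1.0, 2.0, 500.0)],
    [(3.0, 2.0, 700.0), (9.0, 9.0, 900.0)],
]
mask = [
    [True, True],
    [True, False],
]
object_points = points_in_mask(cloud, mask)
pick_point = centroid(object_points)
```

The point of the sketch is the dependency order: the mask has to exist before the 3D lookup means anything, so the texture sits ahead of the point cloud on the critical path.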

The problem with the old pipeline was structural: the RGB texture arrived after the 3D data, meaning the AI inference step couldn’t begin until both data streams had been fully transferred. The system had to wait for the data it would use second before it received the data it needed first.

Depending on scene complexity and network conditions, this added latency could range from a few milliseconds to several hundred – not catastrophic in isolation, but compounding across every cycle in a high-throughput line.

How This Improvement Restructures the Sequence

Early Transfer addresses this by making the pipeline order configurable. Users can now instruct the sensor to prioritize delivering the RGB texture first, immediately upon capture, before the full 3D point cloud transfer is complete.

This unlocks genuine parallelism in the processing chain. While the RGB frame is being transferred and inference is already running on the host, the sensor continues capturing and processing the depth data. By the time the AI model has produced its segmentation map and identified the target object, the 3D geometry for that region is arriving – or already there.
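A minimal sketch of this overlap on the host side, using only the Python standard library. Nothing here is the PhoXi API: `receive_texture`, `receive_point_cloud`, and `run_inference` are hypothetical stand-ins that simply sleep, and the durations are made-up placeholders rather than measurements.

```python
import time
from concurrent.futures import ThreadPoolExecutor

# Placeholder durations in seconds (assumptions, not measured values).
RGB_TRANSFER_S = 0.02
CLOUD_TRANSFER_S = 0.15
INFERENCE_S = 0.10


def receive_texture():
    """Hypothetical stand-in for receiving the RGB texture."""
    time.sleep(RGB_TRANSFER_S)
    return "texture"


def receive_point_cloud():
    """Hypothetical stand-in for receiving the 3D point cloud."""
    time.sleep(CLOUD_TRANSFER_S)
    return "point_cloud"


def run_inference(texture):
    """Hypothetical stand-in for a segmentation model."""
    time.sleep(INFERENCE_S)
    return f"mask_for_{texture}"


def cloud_first_cycle():
    """Old order: cloud, then texture, then inference, strictly in sequence."""
    start = time.perf_counter()
    cloud = receive_point_cloud()
    texture = receive_texture()
    mask = run_inference(texture)
    return mask, cloud, time.perf_counter() - start


def texture_first_cycle():
    """Texture first: inference runs while the cloud is still in flight."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=1) as pool:
        texture = receive_texture()
        cloud_future = pool.submit(receive_point_cloud)  # continues in background
        mask = run_inference(texture)                    # overlaps the transfer
        cloud = cloud_future.result()                    # wait only if still arriving
    return mask, cloud, time.perf_counter() - start
```

Both functions return the same mask and cloud. With the placeholder numbers above, the texture-first cycle finishes in roughly 0.17 s against roughly 0.27 s for the old order.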

The two most time-consuming steps in the pipeline – AI inference and 3D data transfer – now overlap rather than queue sequentially. The wall-clock cycle time drops accordingly.
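As a back-of-the-envelope model, the saving can be written down directly. The sketch below assumes that the texture and point cloud share one link and are sent one after the other, that inference needs only the texture, and that grasp planning needs both the mask and the cloud. The function names and the example timings are illustrative assumptions.

```python
def cloud_first_ms(t_cloud, t_rgb, t_infer):
    """Old order: everything queues, so the three durations add up."""
    return t_cloud + t_rgb + t_infer


def texture_first_ms(t_cloud, t_rgb, t_infer):
    """Texture first: inference and cloud transfer run side by side."""
    return t_rgb + max(t_cloud, t_infer)


def saved_ms(t_cloud, t_rgb, t_infer):
    """Difference between the two orders; algebraically min(t_cloud, t_infer)."""
    return cloud_first_ms(t_cloud, t_rgb, t_infer) - texture_first_ms(t_cloud, t_rgb, t_infer)


# Illustrative timings in milliseconds (assumptions, not measurements).
old = cloud_first_ms(t_cloud=150, t_rgb=20, t_infer=100)    # 270
new = texture_first_ms(t_cloud=150, t_rgb=20, t_infer=100)  # 170
saving = saved_ms(t_cloud=150, t_rgb=20, t_infer=100)       # 100
```

Under these assumptions the saving equals the shorter of the two overlapped steps, so it can never exceed either the inference time or the point cloud transfer time.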

Who This Is Built For

Early Transfer is most impactful for operations that meet two criteria simultaneously:

- RGB-first inference dependency. If your pipeline makes a meaningful decision based on 2D appearance data before it requires 3D geometry – segmentation-guided pick planning, color-based sorting, class-conditional inspection logic – then the RGB frame is genuinely on the critical path, and getting it earlier has direct cycle time value.

- Throughput-sensitive environments. In low-volume or non-time-critical settings, the difference may be imperceptible. In high-throughput logistics, packaging, or e-commerce fulfillment, even reductions of 50–200 ms per cycle translate to measurable OEE (overall equipment effectiveness) improvements at scale.
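To put the 50–200 ms figure in throughput terms, here is a small worked calculation. The 2,000 ms baseline cycle time is an assumed example value, not a figure from Photoneo, and the helper names are illustrative.

```python
MS_PER_HOUR = 3_600_000


def cycles_per_hour(cycle_ms):
    """Number of complete cycles that fit into one hour."""
    return MS_PER_HOUR / cycle_ms


def extra_cycles_per_hour(baseline_ms, saved_ms):
    """Additional cycles per hour gained by shaving saved_ms off each cycle."""
    if not 0 <= saved_ms < baseline_ms:
        raise ValueError("saved_ms must be non-negative and below baseline_ms")
    return cycles_per_hour(baseline_ms - saved_ms) - cycles_per_hour(baseline_ms)


# Assumed baseline: one pick every 2,000 ms, i.e. 1,800 picks per hour.
gain_low = extra_cycles_per_hour(baseline_ms=2000, saved_ms=50)    # about 46 more picks/hour
gain_high = extra_cycles_per_hour(baseline_ms=2000, saved_ms=200)  # 200 more picks/hour
```

The shorter the baseline cycle, the larger the relative gain from the same number of saved milliseconds, which is why the effect shows up mainly on fast lines.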

It’s also worth noting what Early Transfer is not: it doesn’t accelerate the scan itself, doesn’t reduce 3D processing time, and doesn’t change the total volume of data being transferred. It’s a pipeline scheduling optimization, not a hardware or algorithm upgrade. The efficiency gain is real, but it comes from removing artificial wait time – not from doing less work. The real benefit is a shorter time to algorithm: your AI pipeline, and everything downstream of it, can start sooner.

The Broader Shift This Represents

AI inference is no longer an optional layer added on top of classical machine vision. For a growing class of applications, it’s the first, load-bearing step. Engineering the data pipeline to reflect that reality is exactly the kind of integration-layer work that separates a sensor that’s technically capable from one that’s operationally fluid in a modern cell.

For teams designing or redesigning AI-assisted pick-and-place, inspection, or logistics workflows on Photoneo hardware, Early Transfer is worth building around.

Available Now

PhoXi Control & Firmware 1.17 is available for download. Updated documentation, API references, and working code examples for all supported languages are published at github.com/photoneo-3d. The full documentation is available at docs.photoneo.com.